ICO publishes new AI and biometric tech strategy

The UK’s Information Commissioner’s Office has launched a new AI and biometrics strategy to ensure organisations are developing and deploying new technologies lawfully.

The ICO is stepping up its supervision of AI and biometric technologies to strengthen public trust that even the most innovative products and services are using people’s personal information responsibly.

New research on automated decision-making and biometric technologies reveals that the public expect to understand exactly when and how AI-powered systems affect them, and that they are concerned about the consequences when these technologies go wrong.

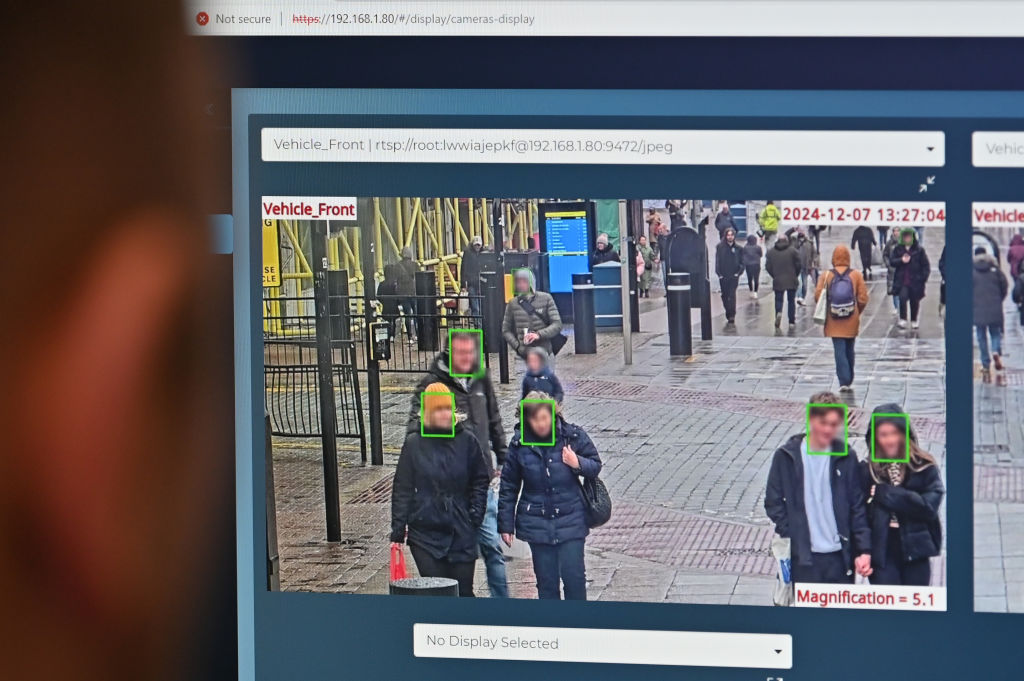

Such concerns include, for example, facial recognition technology (FRT) being used inaccurately, or a flawed automated decision affecting a job application.

Over half (54%) of people surveyed shared concerns that the use of FRT by police would infringe on their right to privacy.

The ICO is focusing on uses of AI and biometrics that are prevalent today and may benefit people’s everyday lives yet cause the most concern and potential for harm if misused.

The strategy aims to provide organisations with certainty and the public with reassurance across a range of use cases.

This includes reviewing the use of automated decision-making systems in the recruitment industry and by government adopters such as the Department for Work and Pensions.

Further, the ICO will be conducting audits and producing guidance on the lawful, fair, and proportionate use of facial recognition technology by police forces.

The ICO will also set clear expectations for protecting people’s personal information when it is used to train generative AI foundation models, and will develop a statutory code of practice to help organisations develop or deploy AI responsibly, supporting innovation while safeguarding privacy.

Finally, the ICO will be scrutinising emerging AI risks and trends, such as the rise of agentic AI, as systems become increasingly capable of acting autonomously.

“The same data protection principles apply now as they always have – trust matters and it can only be built by organisations using people’s personal information responsibly,” the information commissioner, John Edwards, said.

“Public trust is not threatened by new technologies themselves, but by reckless applications of these technologies outside of the necessary guardrails. We are here, as we were 40 years ago, to make compliance easier and ensure those guardrails are in place.”
