Deep learning for network security: an Attention-CNN-LSTM model for accurate intrusion detection

This work assessed the proposed hybrid Attention-CNN-LSTM model on two benchmark datasets, Bot-IoT and NSL-KDD. Performance was evaluated using accuracy, precision, recall, F1-score, and the Matthews correlation coefficient (MCC). The model achieved high classification accuracy and robust detection of multiple attack types on both datasets. These findings indicate that the hybrid method improves intrusion detection relative to conventional models.

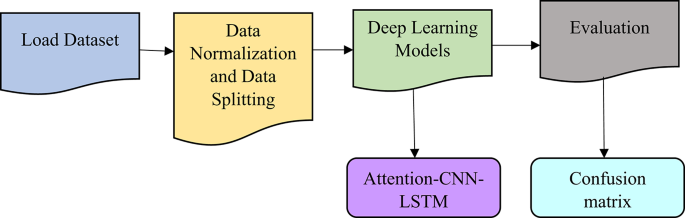

The key hyperparameters and architectural settings used to develop and train the proposed Attention-CNN-LSTM model are listed in Table 2. The model uses the Adam optimizer, chosen for its efficiency in DL tasks, with a learning rate of 0.001 for stable convergence. Based on experimental tuning, a batch size of 128 and 10 training epochs were chosen to balance accuracy and training time. A dropout rate of 0.3 is applied to reduce overfitting. The CNN layers use 64 and 128 filters with kernel sizes of 3 and 5 to capture local patterns in the input data. Max pooling with a window size of 2 reduces spatial dimensions while preserving important features.

The LSTM layer has 64 units, which allows it to effectively learn temporal dependencies in network traffic. An attention layer using a scaled dot-product mechanism helps the model focus on important features during training. Z-score normalization is used to standardize every feature, and the dataset is split into 80% for training and 20% for testing to evaluate model generalizability.
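The preprocessing described above (an 80/20 split followed by z-score normalization) can be sketched as follows. This is a minimal illustration rather than the authors' code: the function names are hypothetical, and fitting the mean and standard deviation on the training rows only is an assumption, since the text does not say how the statistics were computed.

```python
import random
import statistics


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle row indices and split the rows into train and test subsets."""
    indices = list(range(len(rows)))
    random.Random(seed).shuffle(indices)
    n_test = round(len(rows) * test_fraction)
    test = [rows[i] for i in indices[:n_test]]
    train = [rows[i] for i in indices[n_test:]]
    return train, test


def fit_zscore(train_rows):
    """Return per-feature means and standard deviations from the training rows.

    Assumption: statistics are fit on the training split only, so no
    information from the test split leaks into the scaling.
    """
    columns = list(zip(*train_rows))
    means = [statistics.fmean(col) for col in columns]
    # Population standard deviation; constant features fall back to 1.0
    # so that the division below is always defined.
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return means, stds


def apply_zscore(rows, means, stds):
    """Standardize every feature as (x - mean) / std."""
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]
```

The same `means` and `stds` would then be applied to both splits, so that the test set is scaled exactly as the training set was.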

Evaluation metrics

This work evaluated the proposed hybrid Attention-CNN-LSTM model's efficacy in detecting network intrusions using the following metrics. Accuracy gives a basic notion of overall performance. Precision, recall, and F1-score give a more detailed view of how well the model recognizes both normal and attack traffic, particularly under class imbalance. MCC accounts for both false positives and false negatives, so it remains informative even on highly unbalanced datasets. Accuracy is the number of correctly predicted instances (both normal and attack traffic) divided by the total number of instances in the dataset, as given in Eq. (11). This simple statistic gives a broad idea of how well the model is doing.

$$\text{Accuracy}=\frac{TP+TN}{TP+TN+FP+FN} \tag{11}$$

Here, TP denotes true positives (correctly predicted attacks), TN denotes true negatives (correctly predicted normal traffic), FP denotes false positives (normal traffic incorrectly predicted as attacks), and FN denotes false negatives (attacks incorrectly predicted as normal). Precision is the ratio of correctly predicted attack instances (TP) to the total number of instances predicted as attacks (TP + FP), as given in Eq. (12). It shows the percentage of predicted attacks that were real attacks, which matters most where reducing false alarms is paramount.

$$\text{Precision}=\frac{TP}{TP+FP} \tag{12}$$

Recall, given in Eq. (13), measures the proportion of real attack events that the model successfully recognized. This statistic becomes crucial when the objective is to capture as many attacks as possible, even at the cost of some false positives.

$$\text{Recall}=\frac{TP}{TP+FN} \tag{13}$$

To balance precision and recall, the F1-score takes the harmonic mean of the two, as given in Eq. (14). It is particularly useful when the classes are unequally distributed, for instance when one class (regular traffic) is far more numerous than the other (attacks). The F1-score reaches 1 only when both precision and recall equal 1.

$$\text{F1-score}=2\cdot\frac{\text{Precision}\cdot\text{Recall}}{\text{Precision}+\text{Recall}} \tag{14}$$

The MCC, given in Eq. (15), produces a fair assessment of classification performance because it considers all four parts of the confusion matrix: TP, TN, FP, and FN. Since it accounts for both FP and FN, it is especially helpful for assessing performance on unbalanced datasets.

$$\text{MCC}=\frac{TP\cdot TN-FP\cdot FN}{\sqrt{\left(TP+FP\right)\left(TP+FN\right)\left(TN+FP\right)\left(TN+FN\right)}} \tag{15}$$

MCC values range from −1 to +1, where +1 indicates perfect detection, 0 indicates random detection, and −1 indicates total disagreement between detection and truth.
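As an illustration, Eqs. (11) to (15) can be computed directly from the four confusion-matrix counts. The sketch below is not the authors' evaluation code. The function name is hypothetical, and returning 0 when a denominator vanishes is a common convention assumed here, not one stated in the text.

```python
import math


def confusion_metrics(tp, tn, fp, fn):
    """Compute Eqs. (11)-(15) from binary confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Assumed convention: report MCC as 0 when the denominator is zero.
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }


# Hypothetical counts for an unbalanced test set (10 attacks, 990 normal).
# A detector that labels everything as normal looks accurate but detects nothing.
always_normal = confusion_metrics(tp=0, tn=990, fp=0, fn=10)
```

With these hypothetical counts, the always-normal detector reaches an accuracy of 0.99 while its recall and MCC are both 0, which is why accuracy alone is not sufficient on unbalanced intrusion data.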

Table 3 shows the performance metrics for the Bot-IoT dataset. The proposed Attention-CNN-LSTM model correctly classifies 97.5% of network traffic events, more than the CNN (91.2%), LSTM (92.5%), and DNN (90.4%) models [25]. The higher accuracy results from the hybrid model's capacity to capture both spatial (CNN) and temporal (LSTM) patterns in the data, which makes attack identification more robust. Precision measures the proportion of correct attack predictions among all positive predictions. The proposed Attention-CNN-LSTM model achieves 96.3%, better than the other existing models. A key challenge in network intrusion detection is minimizing false positives, an area where the proposed model appears to excel: it misclassifies fewer normal events as attacks.

Recall evaluates how well the model identifies genuine attacks. With a recall of 95.2%, the proposed Attention-CNN-LSTM model detects the vast majority of attacks in the dataset. The hybrid model does better than its component models, LSTM and CNN, which have recall rates of 91.0% and 89.7%, respectively, because it models network traffic both spatially and sequentially. Another important measure is the F1-score, which combines recall and precision; the proposed Attention-CNN-LSTM model has the best F1-score at 95.7%. This shows that the model strikes a balance between detecting attacks (recall) and minimizing false positives (precision).

With an MCC of 0.92, the proposed Attention-CNN-LSTM model shows a strong positive correlation between its predictions and the real labels. By comparison, the DNN and CNN models reach MCC values of 0.83 and 0.85, respectively. MCC is especially relevant for unbalanced datasets such as intrusion detection datasets, and the proposed model's higher MCC shows that it handles positive and negative class predictions more reliably. Across all evaluation criteria, the proposed Attention-CNN-LSTM model therefore detects network intrusions better than the existing models by exploiting both spatial and temporal patterns.

Table 4 gives the performance metrics for the NSL-KDD dataset. With an accuracy of 94.8%, the proposed Attention-CNN-LSTM model outperforms the CNN (91.5%), LSTM (92.0%), and DNN (89.9%) models in the proportion of correctly classified network traffic examples. The improved accuracy is due to the hybrid method's integration of the CNN's spatial pattern recognition with the LSTM's ability to identify temporal relationships in network traffic. Precision measures the proportion of correctly predicted attacks (true positives) among all predicted attacks. The proposed Attention-CNN-LSTM achieves a precision of 93.7%, outperforming the other models. This matters for reducing the number of false alarms in IDSs, as it demonstrates that the model identifies genuine attacks with few false positives.

Recall measures the model's ability to recognize real attack incidents. The proposed Attention-CNN-LSTM model achieves a better recall (92.5%) than the CNN, LSTM, and DNN models. Because an IDS must not overlook attacks, this demonstrates the hybrid model's advantage in identifying attacks with fewer false negatives. The proposed model attains an F1-score of 93.1%, which reflects its ability to detect attacks accurately while keeping the false positive count low. It combines low false alarm rates with strong detection capability, a substantial advance over the other models. With an MCC of 0.89, the predicted and actual labels show a strong positive correlation, a clear improvement over the DNN (0.81), CNN (0.84), and LSTM (0.86) models. MCC is a useful evaluation tool for unbalanced datasets such as NSL-KDD, and the result indicates that the hybrid model handles both positive and negative class predictions well.

Consequently, the proposed Attention-CNN-LSTM model outperforms all other models on every performance indicator. By considering both the spatial and temporal aspects of network traffic, together with attention mechanisms and batch normalization, it improves accuracy, precision, recall, and overall robustness on multiclass classification tasks such as NSL-KDD. It identifies various types of attack effectively.

Table 5 presents a comprehensive comparison between the proposed Attention-CNN-LSTM model and several recent state-of-the-art DL models for intrusion detection, evaluated on both the Bot-IoT and NSL-KDD datasets. The proposed model consistently outperforms the existing methods across all metrics. In particular, the hybrid approach of combining CNN, LSTM, and attention mechanisms allows the proposed model to achieve 97.5% accuracy on Bot-IoT and 94.8% on NSL-KDD. These results highlight the generalizability and robustness of the proposed model across diverse datasets and network environments. The MCC values of 0.92 (Bot-IoT) and 0.89 (NSL-KDD) further support the model's reliability in class-imbalanced scenarios, which are common in cybersecurity data. To ensure statistical robustness, paired t-tests were applied to 5-fold cross-validation results. The differences in accuracy and F1-score between Attention-CNN-LSTM and the baseline models were statistically significant (p < 0.01), validating the observed improvements.
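A paired t-test over 5-fold cross-validation results can be sketched as below. This is only an illustration of the procedure: the per-fold scores are hypothetical placeholders, not values from the paper, and the function name is an assumption. With five folds there are 4 degrees of freedom, for which the two-sided critical value of Student's t at α = 0.01 is approximately 4.604.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """Return (t, degrees_of_freedom) for a paired t-test on matched fold scores."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both models need one score per fold")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all fold differences are identical; t is undefined")
    return mean_diff / (sd_diff / math.sqrt(n)), n - 1


# Hypothetical per-fold accuracies, NOT taken from the paper.
proposed = [0.974, 0.976, 0.975, 0.973, 0.977]
baseline = [0.924, 0.927, 0.923, 0.926, 0.925]

t_stat, dof = paired_t_statistic(proposed, baseline)

# Two-sided critical value of Student's t for alpha = 0.01 and 4 degrees of freedom.
T_CRIT_DF4_ALPHA_001 = 4.604
significant = abs(t_stat) > T_CRIT_DF4_ALPHA_001
```

Pairing the scores fold by fold removes the variation that both models share on the same split, which is why the paired test is preferred over an unpaired one in this setting.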

Discussions

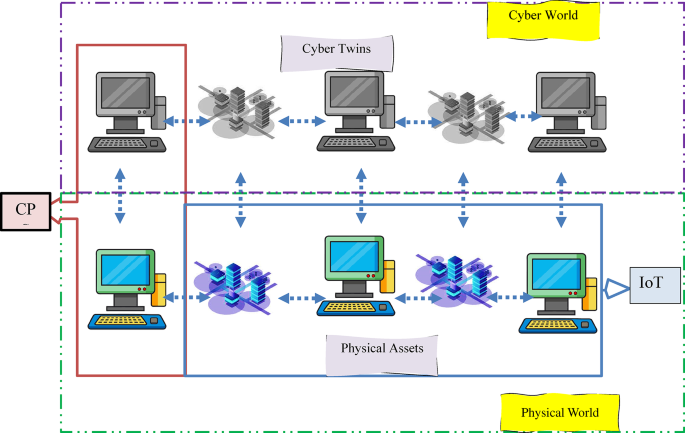

The proposed hybrid Attention-CNN-LSTM model is an effective tool for network security. It improves on existing ML- and DL-based intrusion detection methods in several important ways. One of its main advances is that it handles both the spatial and the temporal features of network traffic efficiently. CNNs excel at extracting spatial features, while LSTMs excel at processing sequential data. The proposed hybrid method combines the two to handle network traffic data in a way that accounts for both the spatial properties of individual packets and their temporal sequence. The model can therefore recognize complex attack patterns that involve both individual and sequential features, such as DDoS attacks or botnet behaviors.

Another noteworthy improvement is the incorporation of the attention mechanism. In real-world network settings, a large amount of irrelevant data may conceal the patterns or abnormalities that are most indicative of an attack. To improve detection speed and accuracy, the attention mechanism enables the model to focus on important attack features while ignoring unnecessary data. The ability to prioritize features is helpful for multiclass classification, especially when dealing with diverse attack types that have intricate patterns. The addition of batch normalization also leaves the proposed model better equipped to handle large and noisy datasets such as NSL-KDD and Bot-IoT. By keeping the inputs to each network layer in a consistent range, batch normalization helps stabilize the learning process. When dealing with unbalanced or noisy data, as is prevalent in real-world network traffic, this leads to quicker convergence, increased training stability, and a lower risk of overfitting.

Improvements in the model's multiclass classification performance are also noteworthy. Identifying different kinds of attacks may be challenging for traditional models such as DNN, LSTM, and CNN, particularly when the class distribution is uneven. The proposed model handles the multiclass nature of the Bot-IoT and NSL-KDD datasets better because its built-in attention mechanism operates on the rich features extracted by the CNN and LSTM layers. Compared with conventional approaches, the model achieves better accuracy, precision, recall, F1-score, and MCC, indicating that it is more capable of detecting a diverse array of attacks. Scalability in real-time network contexts is another possible benefit of the hybrid design. Batch normalization and attention help the model recognize and react to developing attack patterns, while DL components such as CNN and LSTM enable it to manage large-scale traffic data. The model could be further improved for real-time IDSs, where precise and quick detection is crucial to thwart complex cyberattacks.

The attention layer introduces a modest computational overhead (around a 6% increase in training time) but improves interpretability and accuracy. By weighting critical time steps and spatial features, it raises detection accuracy by 2–3% on both datasets. Real-time viability was assessed using simulated network streams. The model processed approximately 1250 records per second on an NVIDIA RTX 2080 Ti, with an average inference latency of 32 ms per sample. While this is suitable for medium-scale networks, optimizations such as model pruning and quantization are necessary for high-throughput settings.
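The throughput and latency figures can be related with simple arithmetic. The sketch below assumes that inference is batched or pipelined, so that several records are processed concurrently. The text does not state a batch size, so this is one possible reading rather than a description of the experimental setup, and the function names are hypothetical.

```python
def per_record_time_ms(throughput_rps):
    """Amortized processing time per record implied by a given throughput."""
    return 1000.0 / throughput_rps


def records_in_flight(throughput_rps, latency_ms):
    """Average number of records being processed at once (Little's law: L = rate * time)."""
    return throughput_rps * latency_ms / 1000.0


amortized_ms = per_record_time_ms(1250)    # 0.8 ms per record
concurrent = records_in_flight(1250, 32)   # 40 records in flight
```

Under this reading, a 32 ms per-sample latency and a rate of 1250 records per second are consistent if roughly 40 records are in flight at any time, for example in one batch.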

Ablation study

Table 6 reports the results of an ablation study designed to assess the contribution of each component of the Attention-CNN-LSTM model. The complete model performs best across all evaluation metrics and both datasets. Removing the attention mechanism (CNN + LSTM only) results in a noticeable drop in both accuracy and F1-score, showing that attention is vital for focusing on important patterns in network traffic. Similarly, the standalone CNN and LSTM models perform worse than the complete hybrid model, confirming the benefit of capturing both spatial and temporal relationships. This evidence supports the architectural design decisions made in constructing the proposed model.

By dynamically weighting the importance of different time steps and spatial features, the attention mechanism plays a central role in enhancing the model's performance. In existing CNN-LSTM architectures, all features contribute equally, which dilutes the relevance of vital attack indicators. With attention added, the model learns to focus on the most informative patterns, which is especially useful for detecting complex or subtle intrusion behaviors. The ablation study in Table 6 confirms that the attention layer contributes a 3–4% improvement in F1-score and MCC, justifying its inclusion. This selective focus on feature relevance offers an advantage over existing IDS frameworks that lack such adaptive prioritization.
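The weighting of time steps by scaled dot-product attention can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' layer: it uses plain Python lists in place of tensors, omits learned projection matrices, and assumes self-attention in which the queries, keys, and values are all the per-step hidden states. The function names are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Return (outputs, weights); weights[i][j] is how much step i attends to step j."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Dot product of the query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Each output is the attention-weighted average of the value vectors.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights


# Hypothetical hidden states for three time steps (self-attention: Q = K = V).
steps = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
context, attn = scaled_dot_product_attention(steps, steps, steps)
```

Each row of `attn` sums to 1, so every output vector is a convex combination of the time-step representations, with larger weights on the steps most similar to the query.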
